This spring, U.S.-based video processing pioneer SightLine Applications announced its acquisition of Athena AI, an Australian defense technology firm specializing in computer vision for edge-AI applications in ISR, counter-UAS, and battlefield autonomy.

The merger is poised to significantly accelerate innovation under AUKUS Pillar 2, which focuses on trilateral AI and autonomy cooperation among Australia, the United Kingdom and the United States.

Athena’s AI stack is already battle-tested, having been used in dynamic targeting scenarios by Australian Joint Terminal Attack Controllers (JTACs). Now that the stack is being integrated into SightLine’s low-latency video platforms, the combined company aims to deliver advanced vision-based autonomy in small form factors, ideal for drones, sensors and robotic platforms across the allied defense ecosystem.

From Australia’s experimental ranges to U.S. defense integration labs, the merged roadmap underscores a shared vision: building trusted and ultra-capable AI that empowers warfighters in seconds—not minutes. As AUKUS Pillar 2 accelerates, expect Athena-SightLine to play a central role in shaping the AI-powered battlefield of the future.

Inside Unmanned Systems spoke with Stephen Bornstein, co-founder of Athena AI, now part of the SightLine executive team, to unpack how the merger is shaping product strategy, enhancing tactical edge performance, and establishing new benchmarks in autonomy.

IUS: How do you see the integration of Athena’s AI stack into SightLine’s video processing platform enhancing the capabilities of both companies?

BORNSTEIN: The integration of Athena and SightLine is a perfect match. We are leveraging 18 years of low-latency video processing technology—built to approved military messaging standards—with Athena’s advanced AI analytics capabilities. Our models will be compatible with all the new chipsets SightLine is producing.

IUS: What were the defining strategic or technological factors that led you to pursue this partnership with SightLine, and what does it unlock that wasn’t previously possible for Athena?

BORNSTEIN: The technologies were complementary—SightLine’s extensive video processing capabilities and Athena’s AI strengths. Additionally, we now have a combined presence in the USA and Australia, representing two of the three AUKUS countries. Finally, each company brought a diverse range of customers, creating large opportunities to add new value to our respective portfolios.

IUS: How are you approaching the product roadmap now that you’re overseeing a combined portfolio across both organizations?

BORNSTEIN: We are end-user centric. We start with the effect the user is trying to achieve—be it low size, weight and power (SWaP) for small platforms or multi-feed autonomous detection and classification for sensor networks. We also factor in OEM feedback and continue internal R&D to improve methods.

IUS: Athena’s vision-based AI operates in some of the most demanding mission areas—what are the core design principles that allow it to perform reliably at the tactical edge?

BORNSTEIN: We focus on high-quality data and network assurance. We work with world-leading tools like LatticeFlow for model testing and collaborate with Australia’s Defence Science and Technology Group (DSTG) to enhance lawful decision-making and rapid response.

IUS: From ISR to fire control, how do your AI models manage ambiguity or uncertainty in real time, especially under adversarial conditions?

BORNSTEIN: We train models on a wide range of adverse environments. Athena has over 10 model types for different use cases. We validate on unseen data and use methods to minimize false positives, ensuring reliable target locks.
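The interview does not describe which methods Athena uses to limit false positives. As a generic illustration only, one common approach is to pick a detection confidence threshold on held-out data so that the false-positive rate stays under a chosen cap. The sketch below assumes binary labels (1 = real target, 0 = non-target); the scores, labels, and the `max_fpr` value are invented for the example, and the function names are hypothetical.

```python
# Generic sketch, not Athena's method: choose the lowest confidence
# threshold whose false-positive rate on held-out data is within a cap.

def false_positive_rate(scores, labels, threshold):
    """Fraction of true negatives scored at or above the threshold."""
    negatives = [s for s, y in zip(scores, labels) if y == 0]
    if not negatives:
        return 0.0
    return sum(s >= threshold for s in negatives) / len(negatives)

def recall(scores, labels, threshold):
    """Fraction of true positives scored at or above the threshold."""
    positives = [s for s, y in zip(scores, labels) if y == 1]
    if not positives:
        return 0.0
    return sum(s >= threshold for s in positives) / len(positives)

def pick_threshold(scores, labels, max_fpr):
    """Lowest observed score usable as a threshold with FPR <= max_fpr.

    FPR never increases as the threshold rises, so the lowest qualifying
    threshold also keeps recall as high as the cap allows.
    Returns None if no observed score satisfies the cap.
    """
    for t in sorted(set(scores)):
        if false_positive_rate(scores, labels, t) <= max_fpr:
            return t
    return None

# Invented held-out scores and labels, for illustration only.
scores = [0.95, 0.90, 0.80, 0.70, 0.60, 0.40, 0.30, 0.20]
labels = [1, 1, 0, 1, 1, 0, 0, 0]

chosen = pick_threshold(scores, labels, max_fpr=0.25)
strict = pick_threshold(scores, labels, max_fpr=0.0)
print("threshold at 25% FPR cap:", chosen, "recall:", recall(scores, labels, chosen))
print("threshold at 0% FPR cap:", strict, "recall:", recall(scores, labels, strict))
```

Tightening the cap from 25% to 0% raises the threshold and lowers recall on this toy data, which is the trade-off any false-positive control has to manage.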

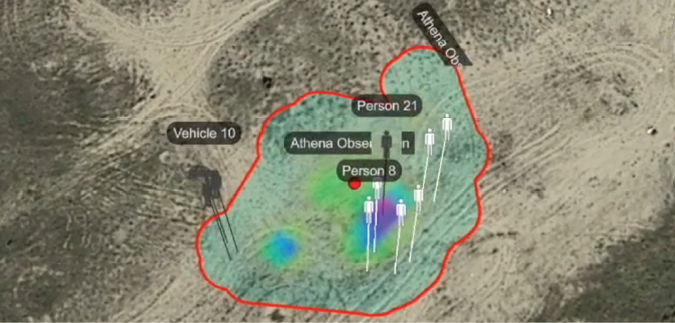

IUS: Could you walk us through a recent scenario or exercise where Athena’s AI significantly improved decision speed or accuracy on the battlefield?

BORNSTEIN: DSTG assessed our system in a dynamic targeting exercise with JTACs. The scenario included civilians and protected persons changing roles dynamically. The results showed that using Athena led to both faster and more lawful decisions.

IUS: Counter-UAS is one of your mission focus areas—how do you train your models to distinguish between threats and non-threats in complex operational environments?

BORNSTEIN: Data is king. We use both real-world and synthetic datasets and stress-test models under fog, rain, occlusion, and distortion. Equally important is ensuring non-threats like civilian transport planes are well represented and correctly labeled during training.

IUS: What’s your philosophy on how much autonomy should be built into lethal decision support systems, and where do you see the balance between automation and human control evolving?

BORNSTEIN: Human authorization remains essential. Systems like SMArt 155 and Phalanx already operate semi-autonomously, but Athena enables “wave-off” capabilities, for example if a civilian enters a strike radius. Sophisticated vision helps, but humans must authorize with knowledge of the risks and capabilities.

IUS: With Athena’s deep roots in Australia and SightLine’s expanded presence in the U.S., how does the partnership align with the AI and autonomy objectives of AUKUS Pillar 2?

BORNSTEIN: This was a key driver. AUKUS enables effective sharing of technology, and between SightLine and Athena, we’re now better positioned to support our allied customers across Pillar 2 AI and autonomy goals.

IUS: What are some of the most exciting joint capability areas between Australia and the U.S. where you believe Athena-SightLine can make an immediate impact?

BORNSTEIN: Ultra-low latency, low SWaP computer vision. With our combined expertise, we’re building onboard video analytics for very small platforms without compromising power or weight—ideal for forward-deployed systems.

IUS: How is Athena evolving its AI beyond object detection and recognition—are you exploring higher-order capabilities like intent inference or predictive modeling?

BORNSTEIN: Yes, we’re exploring temporal reasoning, geospatial analytics, optimized decision support from multiple inputs, and multi-sensor fusion. Detection and classification are just the beginning.

IUS: What role do simulation environments or synthetic data play in scaling and testing your AI under rare or high-risk scenarios?

BORNSTEIN: They help fill gaps in underrepresented data types and allow us to tune for accuracy, latency and robustness. For mission-critical applications like terminal guidance and slew-to-cue, latency is everything.

IUS: Are you working with any defense partners on establishing standards or benchmarks for ethical deployment of AI in mission-critical systems?

BORNSTEIN: Yes, we’ve worked with Trusted Autonomous Systems, DSTG, and the International Weapons Review. These collaborations help ensure our AI complies with standards like Article 36 of Additional Protocol I to the Geneva Conventions.

IUS: Now that Athena is part of a broader global team, what excites you most about the future of AI-driven autonomy in defense—and where do you see the biggest opportunities for innovation in the next 3 to 5 years?

BORNSTEIN: What excites me most is how powerful AI has become on such small platforms. When we started, we could run a detector at 1 fps on 720p video. Now we’re processing 4K video at 5 fps on the same-sized hardware. That’s more coverage, more insight, and real decision support at the edge.
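To put those two figures on a common scale, the short calculation below compares raw pixel throughput. It assumes "720p" means 1280x720 and "4K" means UHD 3840x2160; the interview does not specify the exact resolutions, so the result is indicative only.

```python
# Pixel throughput implied by the two figures quoted above.
# Assumption: "720p" is 1280x720 and "4K" is UHD 3840x2160.

def pixels_per_second(width, height, fps):
    """Pixels processed per second at a given resolution and frame rate."""
    return width * height * fps

early = pixels_per_second(1280, 720, 1)   # 720p at 1 fps
now = pixels_per_second(3840, 2160, 5)    # 4K UHD at 5 fps
ratio = now / early

print(f"720p @ 1 fps: {early:,} px/s")
print(f"4K   @ 5 fps: {now:,} px/s")
print(f"throughput ratio: {ratio:.0f}x")
```

Under those assumptions the jump is roughly 45 times more pixels per second on same-sized hardware: nine times the pixels per frame at five times the frame rate.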