AERO

Through their Autonomous Exploration Rover, or AERO, a team of engineers at Worcester Polytechnic Institute is poised to enhance human exploration capabilities on other planets.

Teleoperated robots have given researchers remarkable images and scientific data from distant planets over the years. AERO, by contrast, is designed to reach places and gather information that these teleoperated robots simply can't. This next-generation space robot was developed to compete in NASA's 2013 Sample Return Robotic Centennial Challenge and is meant to move space exploration toward greater robot autonomy.

Here’s an overview of what it took to develop AERO as well as results from the challenge. If you’d like a more in-depth, inside look at AERO, please download the team’s white paper, “Hierarchical Navigation Architecture and Robotic Arm Controller for a Sample Return Rover.”

About AERO

This sample search-and-return planetary rover features a navigation and vision system that lets it detect, classify, and retrieve objects, which was its primary goal during the NASA challenge. Engineers implemented a modified driving algorithm with three hierarchical navigation layers, which made AERO more reliable.

AERO is built on a four-wheeled, differential-drive mobility platform. The rover is also equipped with a commercially available 6-DOF manipulator, which it used as an arm to collect the samples. This arm gave AERO the flexibility to pick up samples of different sizes and place them at different locations on its top plate.
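A differential-drive platform steers by running its left and right wheels at different speeds. The article gives no dimensions or motor interface for AERO, so the sketch below is a generic illustration under stated assumptions: the function names and the track width are hypothetical. On a four-wheeled skid-steer base, both wheels on a side would receive the same command, and wheel slip means the effective track width may need tuning.

```python
def wheel_speeds(v, omega, track_width):
    """Map a body command to (left, right) wheel linear speeds for a differential-drive base.

    v: forward speed of the body center in m/s
    omega: yaw rate in rad/s (positive turns the robot left, counterclockwise)
    track_width: distance between the left and right wheels in m (hypothetical value)
    """
    half = track_width / 2.0
    left = v - omega * half   # inner wheel slows down in a left turn
    right = v + omega * half  # outer wheel speeds up
    return left, right


def body_velocity(left, right, track_width):
    """Inverse mapping: recover (v, omega) from measured wheel speeds, e.g. encoder odometry."""
    v = (left + right) / 2.0
    omega = (right - left) / track_width
    return v, omega


# Drive forward at 1 m/s while turning left at 0.5 rad/s on a hypothetical 0.5 m track
print(wheel_speeds(1.0, 0.5, track_width=0.5))  # (0.875, 1.125)
```

The inverse function is the same relationship read backwards, which is how wheel-encoder readings turn into the odometry that the localization section relies on.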

The Task

For the challenge, AERO had to navigate a large outdoor area, find various samples, and return them to the starting platform.

The samples fell into three categories: easy, medium, and hard. The easy samples were fully specified in terms of the physical characteristics AERO needed to look for; the medium samples were described only in broad terms of size, color, and texture; and the hard samples were vaguely defined and engraved with a small, unique marking.

To locate the samples, AERO combined data from a fixed, forward-facing stereo vision system, a LIDAR, and KVH's 1750 inertial measurement unit (IMU). This allowed the rover to run a simultaneous localization and mapping (SLAM) algorithm to mark the areas to search and then return to the starting platform at the end of the competition. A second, panning stereo vision system located and identified samples using object-classifier and texture-based algorithms.
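Both stereo systems described above recover range by triangulation. As a minimal sketch, assuming a calibrated, rectified stereo pair, a pixel's depth follows from its disparity d as Z = f·B/d, where f is the focal length in pixels and B is the baseline between the two cameras. The article gives no calibration values, so the function name and every number in the usage line are hypothetical.

```python
def triangulate(u, v, disparity, f_px, baseline_m, cx, cy):
    """Back-project pixel (u, v) of the left image into 3-D camera coordinates (meters).

    Assumes rectified images, so matching pixels differ only in their column (the disparity).
    f_px: focal length in pixels; baseline_m: camera separation; (cx, cy): principal point.
    """
    if disparity <= 0:
        raise ValueError("disparity must be positive for a finite depth")
    z = f_px * baseline_m / disparity  # depth is inversely proportional to disparity
    x = (u - cx) * z / f_px            # pinhole model: lateral offset scales with depth
    y = (v - cy) * z / f_px
    return x, y, z


# Hypothetical calibration: 640 px focal length, 12.5 cm baseline, principal point (320, 240)
print(triangulate(480, 240, 40, f_px=640, baseline_m=0.125, cx=320, cy=240))  # (0.5, 0.0, 2.0)
```

Because depth varies as 1/d, a one-pixel disparity error matters far more for distant objects than nearby ones, which is one reason close-up inspection with a second camera pair is useful.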

The navigation and vision system the team created allowed AERO to avoid obstacles, navigate toward the samples, and then successfully recognize and retrieve them.

“At a conceptual level, the system is designed with hierarchical control layers,” according to the white paper. “The supervisor, global planner, and local planner layers handle the state of the robot, current path to the target, and avoiding obstacles respectively.”
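The three layers in the quote can be sketched as three small functions. This is an illustration under stated assumptions, not the team's code: the supervisor states are hypothetical, the global planner uses A* on an occupancy grid (a common choice that the article does not confirm), and the local planner only halts when a newly sensed obstacle blocks the next waypoint instead of steering around it.

```python
import heapq
from enum import Enum, auto


class State(Enum):
    """Hypothetical supervisor states for a sample-return run."""
    SEARCH = auto()
    APPROACH = auto()
    RETURN_HOME = auto()
    DONE = auto()


def supervisor(state, sample_seen, sample_collected, at_home):
    """Top layer: track what the robot is currently trying to do."""
    if state is State.SEARCH and sample_seen:
        return State.APPROACH
    if state is State.APPROACH and sample_collected:
        return State.RETURN_HOME
    if state is State.RETURN_HOME and at_home:
        return State.DONE
    return state


def global_plan(grid, start, goal):
    """Middle layer: A* over a 4-connected occupancy grid (0 = free, 1 = blocked).

    Returns the list of (row, col) cells from start to goal, or None if unreachable.
    """
    rows, cols = len(grid), len(grid[0])

    def h(cell):  # Manhattan distance: admissible for unit-cost 4-connected moves
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]
    came_from = {start: None}
    g = {start: 0}
    while open_heap:
        _, cost, cur = heapq.heappop(open_heap)
        if cur == goal:
            path = []
            while cur is not None:
                path.append(cur)
                cur = came_from[cur]
            return path[::-1]
        if cost > g[cur]:
            continue  # stale entry: a cheaper route to cur was found after this was pushed
        r, c = cur
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] == 0:
                new_cost = cost + 1
                if new_cost < g.get(nxt, float("inf")):
                    g[nxt] = new_cost
                    came_from[nxt] = cur
                    heapq.heappush(open_heap, (new_cost + h(nxt), new_cost, nxt))
    return None


def local_step(path, live_obstacles):
    """Bottom layer: return the next waypoint, or None (stop) if it is blocked.

    A real local planner would steer around the obstacle; this one only halts.
    """
    if path is None or len(path) < 2:
        return None
    nxt = path[1]
    return None if nxt in live_obstacles else nxt


grid = [[0, 0, 0],
        [1, 1, 0],
        [0, 0, 0]]
path = global_plan(grid, (0, 0), (2, 0))
print(path)  # [(0, 0), (0, 1), (0, 2), (1, 2), (2, 2), (2, 1), (2, 0)]
print(local_step(path, live_obstacles={(0, 1)}))  # None: next cell is blocked, so stop
```

Separating the layers this way lets each run at its own rate: the supervisor changes state rarely, the global path is replanned occasionally, and the local check runs on every sensor update.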

The Sensors

Precise navigation and vision systems were key to AERO successfully locating the samples. The LIDAR, the KVH 1750 IMU, a mast-mounted stereo vision system, and wheel encoders were the main sensors responsible for making that happen.

The AERO team chose an LMS151 LIDAR from SICK for its 50-meter maximum range and excellent outdoor performance. During the challenge, the LIDAR fed the SLAM algorithm directly, providing accurate range data to trees and man-made features in the environment.
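A planar LIDAR like the LMS151 reports a list of ranges at evenly spaced bearings. The sketch below converts one scan into Cartesian points in the sensor frame and discards beams with no valid return or beyond the 50-meter maximum. The parameter names borrow from the fields of the ROS `sensor_msgs/LaserScan` message, but the function itself is a hypothetical plain-Python helper, not ROS code.

```python
import math

MAX_RANGE_M = 50.0  # LMS151 maximum range quoted in the text


def scan_to_points(ranges, angle_min, angle_increment, max_range=MAX_RANGE_M):
    """Convert a planar scan (polar ranges) into (x, y) points in the sensor frame.

    Beam i points at angle_min + i * angle_increment (radians).
    Beams that are non-finite, non-positive, or beyond max_range are dropped.
    """
    points = []
    for i, r in enumerate(ranges):
        if not math.isfinite(r) or r <= 0.0 or r > max_range:
            continue  # no usable return on this beam
        theta = angle_min + i * angle_increment
        points.append((r * math.cos(theta), r * math.sin(theta)))
    return points


pts = scan_to_points([1.0, 60.0, float("inf"), 2.0], angle_min=0.0, angle_increment=math.pi / 2)
print(len(pts))  # 2: the 60 m and inf beams are discarded
```

Points like these, once transformed into the map frame, are what a SLAM front end matches against previously seen features such as tree trunks.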

The KVH 1750, a high-performance IMU based on fiber optic gyros, provided AERO with accelerations and angular velocities that let the rover dead-reckon when no good LIDAR features were available. Engineers selected this fiber optic gyro IMU for its stability and very low drift rates, which make accurate dead reckoning possible for extended periods, even without absolute positioning information from the LIDAR.
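Dead reckoning from an IMU amounts to integrating its outputs: angular velocity once for heading, and acceleration twice for position. The planar sketch below assumes gravity has already been removed from the accelerations and uses simple (semi-implicit) Euler integration; the function name and sample format are hypothetical, and this is not the team's estimator. It also shows why low drift matters: a constant accelerometer bias b produces a position error of roughly b·t²/2, and a constant gyro bias produces a heading error that grows linearly with time.

```python
import math


def dead_reckon(samples, dt, x=0.0, y=0.0, heading=0.0, vx=0.0, vy=0.0):
    """Planar dead reckoning from IMU samples.

    samples: iterable of (ax_body, ay_body, yaw_rate): body-frame accelerations in m/s^2
             with gravity removed (assumption), and yaw rate in rad/s.
    dt: sample period in seconds.
    Returns the final (x, y, heading, vx, vy) in the world frame.
    """
    for ax_b, ay_b, yaw_rate in samples:
        heading += yaw_rate * dt  # integrate angular velocity once
        c, s = math.cos(heading), math.sin(heading)
        ax = c * ax_b - s * ay_b  # rotate body-frame acceleration into the world frame
        ay = s * ax_b + c * ay_b
        vx += ax * dt             # first integration: velocity
        vy += ay * dt
        x += vx * dt              # second integration: position
        y += vy * dt
    return x, y, heading, vx, vy


# One second of 1 m/s^2 forward acceleration, sampled at 10 Hz, with no rotation
x, y, heading, vx, vy = dead_reckon([(1.0, 0.0, 0.0)] * 10, dt=0.1)
print(round(x, 2), round(vx, 2))  # 0.55 1.0
```

The exact answer for this motion is 0.5 m; the extra 0.05 m comes purely from the coarse integration step, a small-scale reminder of how quickly errors accumulate when nothing corrects them.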

The mast-mounted cameras periodically panned to locate trees in the scene and help localize AERO, while wheel odometry from the motor encoders was fused with all the other available data in an extended Kalman filter (EKF) to localize the robot better than any single sensor could on its own.
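The fusion step can be illustrated with a minimal extended Kalman filter over a planar pose [x, y, θ]. In this sketch, wheel odometry (forward speed v and yaw rate ω) drives the prediction through a unicycle motion model, and an absolute (x, y) fix, such as one derived from matching LIDAR or camera landmarks, serves as the measurement. The state layout, noise matrices, measurement model, and class name are all assumptions; the article says only that odometry was fused with the other available data in an EKF.

```python
import math


def matmul(A, B):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
            for i in range(len(A))]


def transpose(A):
    return [list(row) for row in zip(*A)]


def matadd(A, B):
    return [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]


def inv2(M):
    """Inverse of a 2x2 matrix."""
    (a, b), (c, d) = M
    det = a * d - b * c
    return [[d / det, -b / det], [-c / det, a / det]]


class PlanarEKF:
    """EKF over the pose [x, y, theta] (hypothetical state layout)."""

    def __init__(self, x0, P0):
        self.x = list(x0)
        self.P = [list(row) for row in P0]

    def predict(self, v, omega, dt, Q):
        """Propagate the pose with wheel odometry via a unicycle model (theta is not wrapped)."""
        x, y, th = self.x
        self.x = [x + v * dt * math.cos(th), y + v * dt * math.sin(th), th + omega * dt]
        # Jacobian of the motion model with respect to [x, y, theta]
        F = [[1.0, 0.0, -v * dt * math.sin(th)],
             [0.0, 1.0, v * dt * math.cos(th)],
             [0.0, 0.0, 1.0]]
        self.P = matadd(matmul(matmul(F, self.P), transpose(F)), Q)

    def update_position(self, z, R):
        """Correct the pose with an absolute (x, y) measurement z whose covariance is R."""
        H = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]  # h(state) = [x, y], so H is constant
        innov = [z[0] - self.x[0], z[1] - self.x[1]]
        S = matadd(matmul(matmul(H, self.P), transpose(H)), R)
        K = matmul(matmul(self.P, transpose(H)), inv2(S))  # 3x2 Kalman gain
        self.x = [self.x[i] + K[i][0] * innov[0] + K[i][1] * innov[1] for i in range(3)]
        KH = matmul(K, H)
        I_minus_KH = [[(1.0 if i == j else 0.0) - KH[i][j] for j in range(3)] for i in range(3)]
        self.P = matmul(I_minus_KH, self.P)


ekf = PlanarEKF([0.0, 0.0, 0.0], [[1.0, 0, 0], [0, 1.0, 0], [0, 0, 0.1]])
ekf.predict(v=1.0, omega=0.0, dt=1.0, Q=[[0.1, 0, 0], [0, 0.1, 0], [0, 0, 0.01]])
ekf.update_position([1.5, 0.0], R=[[1.1, 0], [0, 1.1]])
print(ekf.x[0])  # close to 1.25
```

In this example, odometry predicts x = 1.0 m and the fix says 1.5 m. Because the predicted x variance (1.1) equals the measurement variance, the filter weights them equally and lands halfway, while the x variance shrinks from 1.1 to 0.55.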

How AERO Detected and Classified the Samples

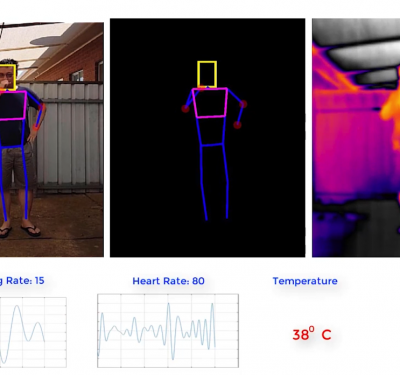

AERO relied on its computer vision system to detect and classify samples during the challenge. Its mast-top cameras identified anomalies in the grass that could be samples and marked them on a probabilistic map maintained on the robot. AERO then inspected each potential sample close up using its fixed, front-mounted vision system.
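One common way to keep a probabilistic map of candidate detections is a log-odds grid: each time a cell is observed, its log-odds rises on a detection and falls on a miss, and cells whose probability crosses a threshold are queued for close-up inspection. The article does not describe AERO's map representation, so the class name, grid, update increments, clamping limits, and threshold below are all hypothetical.

```python
import math


class SampleMap:
    """Grid of log-odds that each cell contains a sample (hypothetical parameters)."""

    def __init__(self, width, height, l_hit=0.85, l_miss=-0.4, l_min=-4.0, l_max=4.0):
        self.logodds = [[0.0] * width for _ in range(height)]  # 0.0 means p = 0.5
        self.l_hit, self.l_miss = l_hit, l_miss
        self.l_min, self.l_max = l_min, l_max

    def observe(self, row, col, detected):
        """Fold one observation of a cell into its log-odds."""
        l = self.logodds[row][col] + (self.l_hit if detected else self.l_miss)
        # Clamp so that a cell can still change its mind after many observations
        self.logodds[row][col] = max(self.l_min, min(self.l_max, l))

    def probability(self, row, col):
        return 1.0 / (1.0 + math.exp(-self.logodds[row][col]))

    def candidates(self, threshold=0.7):
        """Cells worth a close-up look, most likely first."""
        cells = [(self.probability(r, c), r, c)
                 for r in range(len(self.logodds))
                 for c in range(len(self.logodds[0]))
                 if self.probability(r, c) >= threshold]
        return [(r, c) for _, r, c in sorted(cells, reverse=True)]


m = SampleMap(5, 5)
for _ in range(3):
    m.observe(4, 4, detected=True)  # flagged three times by the mast cameras
m.observe(1, 2, detected=True)
m.observe(1, 2, detected=False)     # flagged once, then missed
print(m.candidates())  # [(4, 4)]
```

Accumulating evidence this way means a single spurious anomaly in the grass does not immediately send the rover on a detour, while a repeatedly detected one rises to the top of the queue.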

The fixed, forward-facing vision system then extracted the location, major axis, and bounding box of each sample, which helped determine a suitable path for AERO to retrieve it. The arm controller node used the object's classification and orientation to help determine which type of grip to use.
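The location, major axis, and bounding box of a segmented sample can all be computed from image moments. The sketch below works on a binary mask (1 = sample pixel) and uses the standard result that the major axis lies at θ = ½·atan2(2μ11, μ20 − μ02) from the image x-axis, where the μ terms are second-order central moments. The segmentation that produces the mask is assumed to have happened already, and the function name is hypothetical; in OpenCV, comparable quantities are available from `cv2.moments` and `cv2.boundingRect`.

```python
import math


def blob_geometry(mask):
    """Centroid, major-axis angle, and bounding box of the foreground pixels in a binary mask.

    mask: list of rows, each a list of 0/1 values (row index = y, column index = x).
    Returns (cx, cy, angle_rad, (x_min, y_min, x_max, y_max)), or None if the mask is empty.
    angle_rad is measured from the +x axis in image coordinates (y points down).
    """
    pts = [(x, y) for y, row in enumerate(mask) for x, val in enumerate(row) if val]
    if not pts:
        return None
    n = len(pts)
    cx = sum(x for x, _ in pts) / n  # centroid = first-order moments / area
    cy = sum(y for _, y in pts) / n
    # Second-order central moments, normalized by area
    mu20 = sum((x - cx) ** 2 for x, _ in pts) / n
    mu02 = sum((y - cy) ** 2 for _, y in pts) / n
    mu11 = sum((x - cx) * (y - cy) for x, y in pts) / n
    angle = 0.5 * math.atan2(2.0 * mu11, mu20 - mu02)
    xs = [x for x, _ in pts]
    ys = [y for _, y in pts]
    return cx, cy, angle, (min(xs), min(ys), max(xs), max(ys))


diag = [[1 if r == c else 0 for c in range(4)] for r in range(4)]
cx, cy, angle, bbox = blob_geometry(diag)
print(cx, cy, round(math.degrees(angle)), bbox)  # 1.5 1.5 45 (0, 0, 3, 3)
```

A grasp planner could, for instance, close the gripper jaws across the returned major axis; the article does not say how AERO's arm controller used these values, so treat that use as hypothetical.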

The Results

The navigation and vision algorithms needed for the challenge were implemented with the Robot Operating System (ROS) and OpenCV on Ubuntu Linux 12.04. This flexible, intuitive software stack made it easy to collect data for visualization and testing.

During the challenge, AERO successfully avoided both stationary and moving obstacles while completing the task. In benchtop testing, it retrieved samples successfully multiple times.

AERO has the potential to take researchers where they’ve never been, and the Worcester Polytechnic Institute engineers are continuing to test this planetary rover outdoors, including in locations with rough terrain similar to extraterrestrial environments.

If you’d like an even more in-depth look at what went into developing AERO, please download the white paper here.