AutonomouStuff is integrating GNSS and an inertial measurement unit (IMU) into its custom product for developers. The company combines antennas, firmware, inertially augmented systems, and software. Applications include highway automation with lane centering, according to the company.

Whether the autonomous vehicle market takes off in a few years will depend not only on sensor prices dropping dramatically, but also on what combination of systems developers use.

The commoditization of perception sensors will allow the self-driving car industry to experience a revolution similar to the growth of computers in the 1970s and 1980s, said Bobby Hambrick, AutonomouStuff CEO. “The pricing of sensors will significantly decrease, for production and research and development, starting now and much more in the upcoming two to three years,” he said.

However, given the different autonomous vehicle driving conditions, Hambrick said he wouldn’t give higher importance to one sensor over another. “Each sensor modality has its own tradeoffs. They will be fused together in order to provide truly robust automation,” he said. “Many sensor technologies are used for fully autonomous driving because no one sensor is immune to all conditions. Of course, camera, LiDAR and radar are mostly used for perception, but there are many other inputs necessary for full autonomous driving.”

Hambrick cites data inputs for localization, traffic conditions, risk map data, and driver mood and behavior as additional important inputs for autonomous vehicles. He sees the traditional mantra of ‘smaller, faster, cheaper and easy-to-manufacture’ becoming the norm in autonomous vehicle sensor technology.

One of the major sensors for autonomous vehicles, LiDAR, is coming down in price, which will be attractive to developers. “LiDAR sensors are higher priced today due to limited unit volumes, usually less than 10,000 units,” said Mike Jellen, Velodyne’s president and COO. “That said, per-unit costs for LiDAR sensors can be quoted today at much more attractive prices when increased to automotive production volumes. Essentially, carmakers committing to production programs are receiving great LiDAR sensing pricing.”

LiDAR remains one of the main autonomous sensors because it creates 3-D images at 200 meters, whereas cameras have, in relative terms, limited range and field of view, Jellen said. “Furthermore, cameras are susceptible to changing light conditions and shadows. This could be problematic for commuters traveling during dawn and dusk, as an example,” he said. “Meanwhile, LiDAR sensors operate well in most lighting conditions, and in fact, LiDAR sensors operate well without any lighting at all.” In August, Velodyne received a $150 million investment from Ford and Chinese Internet search engine giant Baidu.

GNSS Sensors Don’t Get the Hype

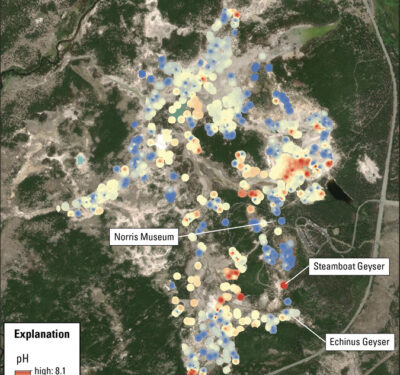

Navigation and guidance for any autonomous system start with its GNSS receiver. However, compared to cameras, LiDAR, radar and other sensors, GNSS gets little publicity in most published reports, said Jon Auld, NovAtel director, safety critical systems.

“I may be biased here, but I think some in the industry are underestimating the GNSS component as a critical sensor. At present, camera and LiDAR technology are getting a lot of attention, but they have limitations in their capability for high-precision, absolute positioning,” he said. “GNSS offers worldwide coverage, all weather operation, the ability to provide positioning between two vehicles that cannot see each other and positioning when road and sign markings are not visible. The public will expect an autonomous car to just work and they will not be content unless the overall solution is available at a very high rate.”

As with any new technology, autonomous vehicles will go through the same price pressures, Auld said. “Initially, the systems will be installed on higher-end platforms where the initial costs of early systems can be tolerated. As the technology matures and the volumes of cars with this capability increase, there will be a natural migration to lower overall system costs,” he said. “Since an autonomous driving system is a fusion of several sensors, the lower the cost of the individual sensors, the lower the overall price. These systems are all about sensor fusion at the [electronic control unit] level where many separate inputs from a variety of sensors are brought together to provide an overall solution.”

NovAtel’s interest in autonomous vehicle development dates to the Defense Advanced Research Projects Agency (DARPA) Grand Challenge in the California desert more than a decade ago. Earlier this year, the company formed a safety critical systems group to leverage its aviation technology experience to meet requirements for driverless cars.

Inertial Measurement Units Part of Autonomous Sensor Family

Sensor companies are attempting to help automakers achieve full autonomous driving, or Level 5 on the Society of Automotive Engineers (SAE) and National Highway Traffic Safety Administration (NHTSA) driving scales. At that level, all functions, including driving, are handled by the vehicle, not a driver.

Companies such as Morton, Ill.-based AutonomouStuff, which combines several sensor technologies in its autonomous research and development platform, and NVIDIA, through its PX 2 module, offer driverless car developers technology options to accelerate testing.

In the meantime, automakers are taking different technological approaches to sensor integration, some more minimalist than others, said Jay Napoli, KVH Industries vice president, sales and marketing. “Everyone is aware of the way GNSS can fail when a signal disappears in certain weather conditions. LiDAR also cannot identify shapes in snow, rain, or even mist and fog,” he said. “Inertial systems can’t be jammed or fooled because they measure the actual motion of a vehicle. However, one drawback is that inertial units will have drift, which has to be corrected.”

Napoli said that a successful autonomous vehicle will have multiple suites of sensors including GNSS, radar, inertial systems, and cameras. “They seem to be the ones who can achieve SAE Level 5 [results],” said Napoli, whose company offers a fiber optic gyro (FOG) based inertial system for self-driving cars.

FOG systems are part of an inertial measurement unit (IMU), along with accelerometers, which provide angular rate and acceleration data to track a car’s position.
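As a rough illustration of how angular rate and acceleration data can track a car's position, and why the drift Napoli mentions needs correcting, the minimal Python sketch below dead-reckons a 2-D pose from gyro and accelerometer samples. The function name `dead_reckon`, the sample format, and the 0.01 degree-per-second gyro bias are assumptions chosen for illustration; they do not describe any vendor's product or algorithm.

```python
import math

def dead_reckon(samples, dt, x=0.0, y=0.0, heading=0.0, speed=0.0):
    """Integrate (yaw_rate, forward_accel) samples into a 2-D pose.

    samples: iterable of (yaw_rate_rad_s, accel_m_s2) tuples (hypothetical format)
    dt: sample period in seconds
    """
    for yaw_rate, accel in samples:
        heading += yaw_rate * dt              # gyro: angular rate -> heading
        speed += accel * dt                   # accelerometer: acceleration -> speed
        x += speed * math.cos(heading) * dt   # speed and heading -> position
        y += speed * math.sin(heading) * dt
    return x, y, heading, speed

# Straight drive at a constant 10 m/s for 60 s, sampled at 100 Hz.
n, dt = 6000, 0.01
ideal = dead_reckon([(0.0, 0.0)] * n, dt, speed=10.0)

# Same drive, but the gyro reports a small constant bias (assumed value).
bias = math.radians(0.01)
biased = dead_reckon([(bias, 0.0)] * n, dt, speed=10.0)

# Sideways position error caused purely by the uncorrected gyro bias.
cross_track_error = abs(biased[1] - ideal[1])
print(f"cross-track error after 60 s: {cross_track_error:.2f} m")
```

With these assumed numbers, the uncorrected bias alone pushes the estimate a few meters off a lane that is only a few meters wide, which is why inertial data is typically combined with an absolute reference such as GNSS.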

Another company that offers inertial units for autonomous vehicles, Sensonor, said a major sensor challenge has been to measure feedback when a car turns. “We have been working with every car manufacturer who are building autonomous cars in the last two years,” said Hans Richard Petersen, Sensonor vice president, sales and marketing. “Through that testing, we are finding that the only way to measure feedback, when a car turns, is to have a very good gyro. It’s a picture that tells a car to do a specific turn within a few centimeters,” he said.

Petersen believes FOG systems are too expensive for autonomous vehicles. “We have been testing sensors for autonomous vehicles for 10 years. The cost of fiber optic systems make mass production of autonomous vehicles not possible,” he said.

Market May Decide Sensor Technology…

Regardless of which sensor comes to dominate on performance or price, automakers won’t be neutral players in the process, said Scott Frank, Airbiquity vice president of marketing.

“We believe leading automakers will actually accelerate autonomous investments to compete aggressively and mitigate the risks of market share and revenue loss,” he said. “Twelve months ago, it may have seemed like traditional automakers weren’t moving fast enough to compete with disrupters like Tesla and Google, but the tide has clearly turned as evidenced by a slew of recent automaker announcements about autonomous related acquisitions, investments, and agreements. In addition, [they are] revealing autonomous technology development initiatives that have been happening behind the scenes for many years.”

Federal and local regulations also could impact autonomous vehicle sensor development, Frank said. “It’s clear the development and deployment of autonomous technology is moving ahead of lawmakers’ ability to draft and pass regulations pertaining to it, and this will increasingly impact the deployment speed for full autonomous vehicles as defined by SAE Level 5,” he said.

Paul Drysch, RideCell vice president, new mobility business, agrees that government regulations may hamper the technological development of autonomous vehicles, which may drive companies to find easier implementation overseas. “You need city, county, state and federal laws to change. This will happen in a hodge-podge way, so I bet the first truly driverless vehicles will launch outside of the U.S.,” he said.