At AUVSI Xponential 2025 in Houston, the exhibit hall was humming with talk of autonomy, AI, and the pressing need to operate in contested environments. For Teledyne FLIR, those buzzwords aren’t distant aspirations—they’re practical engineering challenges being solved today.

I sat down with Art Stout, a subject-matter expert at Teledyne FLIR, who walked me through the company’s latest work in thermal imaging, embedded AI, and flight management. What emerged was a story about more than just cameras. It was about convergence: sensors, algorithms, and compute power coming together to enable unmanned systems to see, decide, and act in real time.

From Cores to Context

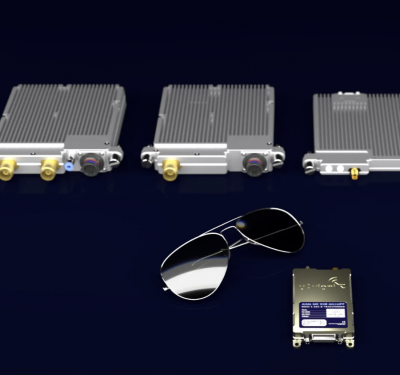

Teledyne FLIR came to Xponential with a portfolio that underscores the breadth of its imaging heritage. At one end are cooled thermal cores, high-performance sensors designed for long-range, high-sensitivity detection. At the other are compact, uncooled Boson microbolometer cores, small enough to fit on a hand-launched UAV.

But Stout was quick to emphasize that the real innovation is not just in the optics. “We are now expanding into image signal processing and AI on embedded platforms such as the Qualcomm Snapdragon series processors,” he said. “That allows us to extend imaging and offer advanced image processing and decision support.”

In other words, the sensors no longer just deliver pixels—they deliver context.

“There’s a convergence happening between sensors, algorithms, and the intelligence to run on-device. That’s where the real breakthroughs are.”

—Art Stout, Teledyne FLIR

Thermal Vision, Tactical Advantage

For unmanned platforms, the first-order problem is often visibility. Drones operating at night, in fog, or under canopy don’t have the luxury of waiting for better conditions. Thermal imaging fills that gap.

“From an imaging point of view, it’s about low-light or nighttime operations,” Stout explained. “The ability to add nighttime capabilities to drones is a big demand.”

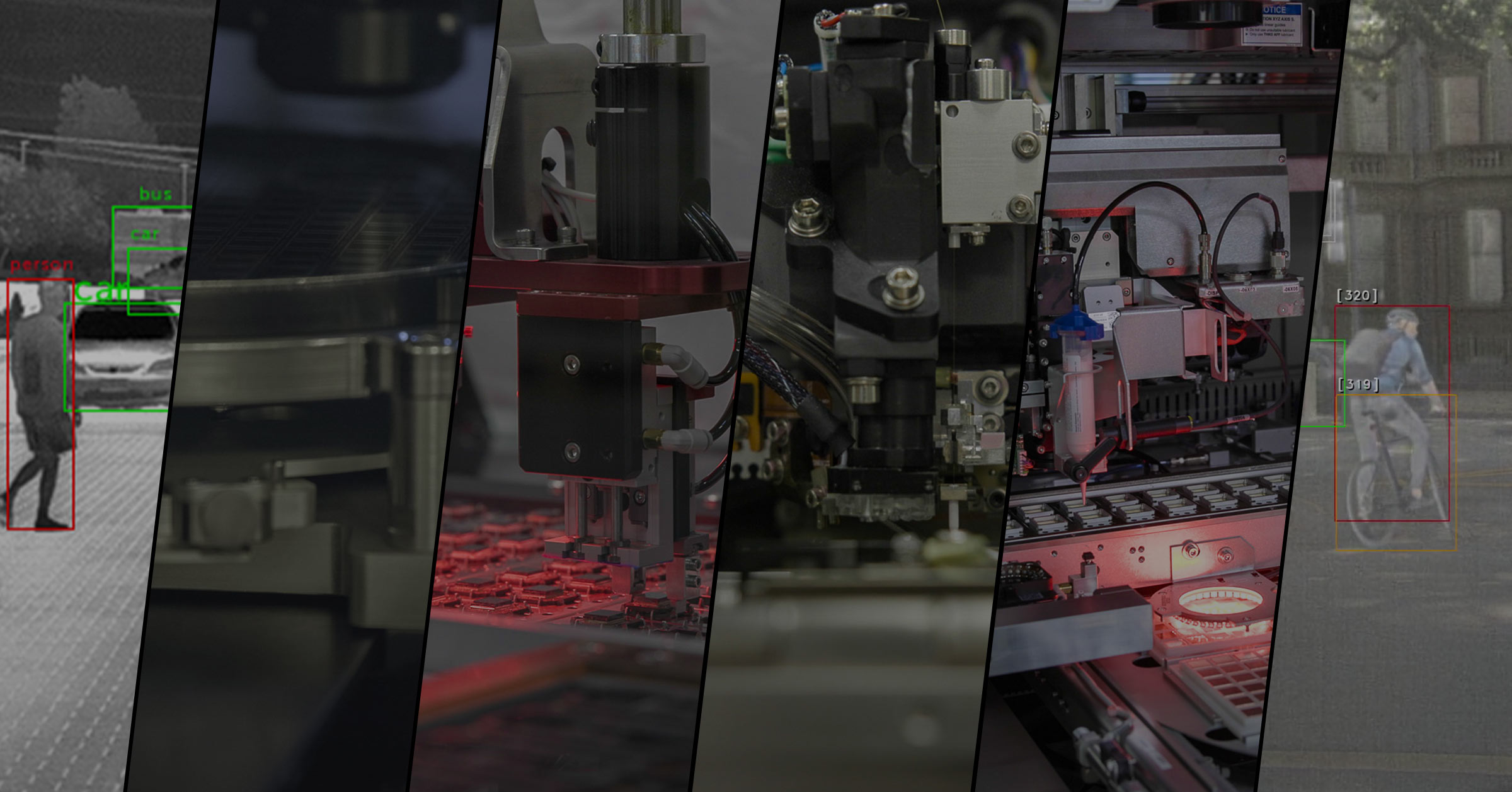

It’s a demand that extends far beyond surveillance. In loitering munitions and weaponized platforms, thermal sensors become the eyes feeding automatic target recognition (ATR) software. Detection and tracking algorithms rely on those inputs to classify and engage targets with precision, whether the mission is counter-UAS defense, perimeter security, or beyond-line-of-sight reconnaissance.

FLIR’s Portfolio at a Glance

- Cooled Cores – High sensitivity, long-range detection.

- Boson Uncooled Microbolometers – Compact, lightweight, small-UAS ready.

- Embedded AI – Qualcomm Snapdragon and other processors for on-board analytics.

- ATR Software – Automatic target recognition using thermal inputs.

- Flight Management Layer – Middleware between perception and autopilot.

Navigation Without GPS

As sophisticated as unmanned systems have become, their dependence on GPS remains an Achilles’ heel. Jamming, spoofing, or simple signal denial can cripple an otherwise capable platform.

Teledyne FLIR sees this as a growth frontier. Stout described visual navigation, a method that pairs thermal or electro-optical (EO) imagery with machine learning and inertial measurement to locate a system without satellite signals.

“Regardless of whether it’s a loitering munition or a surveillance drone, the need to know your location is common across every platform,” he said. “Doing that in a GPS-denied environment is a critical capability.”

This isn’t speculative. Visual navigation is being fielded today, and thermal sensors are a core enabler. In environments where visible light falters, thermal contrast provides the features machine learning models need to maintain situational awareness.
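To make the idea concrete, here is a minimal sketch of how inertial dead reckoning and image-derived position fixes can be blended. It is an illustration only, not Teledyne FLIR’s method: the one-dimensional model, the scalar Kalman update, the 0.5 m/s velocity bias, and every name in the code (`PositionEstimate`, `predict`, `correct`, `simulate`) are assumptions made for this example. The "visual fix" stands in for whatever position a vision model would report after matching imagery to known terrain.

```python
from dataclasses import dataclass


@dataclass
class PositionEstimate:
    """1-D position estimate and its variance (metres, metres squared)."""
    x: float
    var: float


def predict(est: PositionEstimate, velocity: float, dt: float,
            drift_var_per_s: float) -> PositionEstimate:
    """Dead-reckon forward from inertial velocity; uncertainty grows with time."""
    return PositionEstimate(est.x + velocity * dt,
                            est.var + drift_var_per_s * dt)


def correct(est: PositionEstimate, visual_fix: float,
            fix_var: float) -> PositionEstimate:
    """Blend in an image-derived position fix (scalar Kalman update)."""
    gain = est.var / (est.var + fix_var)
    return PositionEstimate(est.x + gain * (visual_fix - est.x),
                            (1.0 - gain) * est.var)


def simulate(steps: int = 60, fix_every: int = 10) -> tuple[float, float]:
    """Return final position error (m) without and with visual fixes."""
    true_v, measured_v, dt = 10.0, 10.5, 1.0  # assumed: inertial velocity reads 0.5 m/s high
    truth = 0.0
    dead = PositionEstimate(0.0, 1.0)   # inertial only
    fused = PositionEstimate(0.0, 1.0)  # inertial plus periodic visual fixes
    for step in range(1, steps + 1):
        truth += true_v * dt
        dead = predict(dead, measured_v, dt, drift_var_per_s=0.25)
        fused = predict(fused, measured_v, dt, drift_var_per_s=0.25)
        if step % fix_every == 0:
            # Hypothetical stand-in for an image-matching result (exact here).
            fused = correct(fused, visual_fix=truth, fix_var=4.0)
    return abs(dead.x - truth), abs(fused.x - truth)


if __name__ == "__main__":
    dead_err, fused_err = simulate()
    print(f"inertial only: {dead_err:.1f} m, with visual fixes: {fused_err:.1f} m")
```

With these assumed numbers, the inertial-only estimate drifts by 30 m after 60 seconds (0.5 m/s of bias over 60 s), while the fused estimate stays bounded because each fix pulls it back toward the true position. A fielded system would work in three dimensions, estimate attitude and sensor bias, and handle imperfect fixes.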

Autonomy Beyond Line-of-Sight

Of course, sensing and navigating are only part of the equation. True autonomy requires decision-making—software that can translate perception into action.

Here, Teledyne FLIR is bridging the gap between perception and autopilot with a new flight management layer.

“It allows the platform to change its behavior based on a script,” Stout said. “If this, then do that. Loiter if conditions are met. Change priorities based on what it observes.”
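The "if this, then do that" pattern Stout describes can be sketched as a small, prioritized rule table that sits between perception outputs and autopilot commands. This is a hypothetical illustration, not the company’s flight management layer: the `Observation` fields, the rule names, and the behavior strings are all invented for the example.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Observation:
    """Hypothetical perception summary handed to the rule layer."""
    target_detected: bool
    gps_available: bool


@dataclass(frozen=True)
class Rule:
    """One scripted 'if condition, then behavior' entry."""
    name: str
    condition: Callable[[Observation], bool]
    behavior: str  # command the autopilot would be asked to execute


# Ordered by priority: the first rule whose condition holds wins.
RULES: Sequence[Rule] = (
    Rule("loiter_on_target", lambda o: o.target_detected, "LOITER"),
    Rule("visual_nav_on_gps_loss", lambda o: not o.gps_available,
         "SWITCH_TO_VISUAL_NAV"),
)


def select_behavior(obs: Observation, rules: Sequence[Rule] = RULES,
                    default: str = "CONTINUE_ROUTE") -> str:
    """Return the behavior of the highest-priority matching rule."""
    for rule in rules:
        if rule.condition(obs):
            return rule.behavior
    return default


if __name__ == "__main__":
    print(select_behavior(Observation(target_detected=True, gps_available=False)))
    print(select_behavior(Observation(target_detected=False, gps_available=False)))
    print(select_behavior(Observation(target_detected=False, gps_available=True)))
```

Reordering the table is one simple way to "change priorities": when a target is detected and GPS is lost at the same time, whichever rule appears first decides the behavior.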

In contested environments, RF links may be severed, and GPS may be denied. The only way forward is to push intelligence to the edge—so systems can adapt, reprioritize, and continue missions without human intervention.

“Adding that layer of intelligence to devices is an important capability. In contested environments, there is no RF, there is no GPS.”

—Art Stout, Teledyne FLIR

The Compute Revolution

Underlying all of this is a quiet revolution in compute power. Stout pointed to Qualcomm’s latest embedded processors, which can execute up to 50 trillion operations per second while consuming just 2.5 watts.

That combination of performance and efficiency does more than extend flight times. It tackles one of the most persistent challenges in UAV design: thermal management.

“For most developers here at the show, it’s less about power consumption than about heat,” Stout said. “Running complex software stacks on embedded processors with manageable thermal profiles is a big advance.”

This leap makes it feasible to deploy sophisticated AI workloads—ATR, navigation, mission logic—directly on small unmanned platforms. What once required a server rack now fits in the palm of a hand.
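For a sense of scale, the figures Stout cited (up to 50 trillion operations per second at 2.5 watts) can be turned into efficiency numbers with simple arithmetic. The helper names below are made up for this example, and the result reflects the quoted peak figures rather than a measured workload.

```python
def tops_per_watt(tops: float, watts: float) -> float:
    """Peak trillions of operations per second delivered per watt."""
    if tops <= 0 or watts <= 0:
        raise ValueError("tops and watts must be positive")
    return tops / watts


def picojoules_per_op(tops: float, watts: float) -> float:
    """Energy per operation in picojoules.

    watts is joules per second and tops * 1e12 is operations per second,
    so joules per operation is watts / (tops * 1e12). Multiplying by 1e12
    to convert to picojoules leaves watts / tops.
    """
    if tops <= 0 or watts <= 0:
        raise ValueError("tops and watts must be positive")
    return watts / tops


if __name__ == "__main__":
    # Figures quoted in the interview: up to 50 TOPS at 2.5 W.
    print(tops_per_watt(50.0, 2.5))      # 20.0 TOPS per watt
    print(picojoules_per_op(50.0, 2.5))  # about 0.05 pJ per operation
```

Nearly every watt a processor draws ends up as heat the airframe has to shed, which is why a figure like 20 TOPS per watt matters as much for thermal design as for battery life.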

Growth Frontiers

When asked where Teledyne FLIR sees the greatest opportunities over the next few years, Stout didn’t hesitate:

- Perception and Autonomy – Bringing intelligence to sensors across domains.

- GPS-Denied Navigation – Visual navigation as a universal need.

- Autonomous Target Engagement – Precision models for loitering munitions and beyond.

Taken together, these priorities reflect the company’s role not just as a sensor provider, but as an autonomy enabler.

Convergence as the Takeaway

As the conversation wound down, Stout returned to a central theme: convergence.

“There’s a bit of convergence in terms of what’s happening on sensors, what’s happening on algorithms, and then obviously the intelligence to run on-device,” he said.

That convergence is changing unmanned systems at a fundamental level. A decade ago, drones were remote-controlled cameras. Today, they are becoming autonomous agents—capable of navigating denied environments, classifying targets, and making decisions at machine speed.

The takeaway for Xponential attendees was clear: the future of autonomy isn’t about any single breakthrough. It’s about integration—thermal sensors, AI algorithms, and embedded compute power working together on the edge.

As we walked the floor in Houston, it was impossible not to notice how often these themes came up: resilience in GPS-denied environments, autonomy without constant human control, efficiency at the edge. Teledyne FLIR’s booth was a case study in how one company is addressing all three simultaneously.

For unmanned system developers, the implications are profound. Platforms equipped with thermal perception, visual navigation, and adaptive autonomy will not only survive in contested spaces—they will thrive.

And as Stout reminded me, these capabilities are no longer future goals. They are here, now, ready to be integrated into the next generation of unmanned systems.