Manned-unmanned teaming, or MUM-T, is playing a role in military operations across domains and borders.

In combat, the battlefield is constantly evolving and becoming increasingly complex. Warfighters are no longer dealing with simple tank-on-tank or infantry-versus-infantry scenarios. Cyberelectronic warfare and multidomain threats are among the factors changing the landscape, and forces need every advantage possible to overtake the enemy.

Manned-unmanned teaming, also known as MUM-T or advanced teaming, has the potential to introduce more dilemmas to adversaries and make armed forces more effective, said Maj. Cory Wallace, robotic combat vehicle lead for the U.S. Army’s Next Generation Combat Vehicles Cross Functional Team (NGCV CFT).

With both manned and unmanned assets working together toward a common goal, critical information can be delivered to the commander faster than ever before—and the commander who makes the most informed decision the fastest wins.

Instead of trading contact with the enemy for information, humans and robots work together to collect and deliver that information, Wallace said. This decreases the risk to warfighters and provides a higher degree of response in a variety of scenarios.

“If soldiers want to deploy electronic warfare, for example, they have to work through several different tiers of command to get down to the point of need,” he said. “With manned-unmanned teaming you can do that seamlessly because the systems are co-located.”

Over the last few years, Wallace’s team has completed various demonstrations looking at how MUM-T can give warfighters an advantage, allowing them to close the decision cycle faster. The most recent, in April, was a two-week live fire exercise with the Project Origin platform, a robotic combat vehicle (RCV) surrogate system. In that demo, soldiers remotely controlled the weapon on the vehicle’s platform to fire at line-of-sight and non-line-of-sight targets.

Other experiments have focused on testing emerging technologies to illustrate how RCVs can be integrated with other assets, including UAS, all showing the potential for MUM-T operations to present complex dilemmas to adversaries while increasing soldier survivability.

The U.S. Army isn’t alone in its efforts. Other military branches, organizations and defense companies are also looking into MUM-T and the different ways it might be deployed.

The U.S. Navy, for example, recently completed a virtual demo with Boeing and Northrop Grumman and has included MUM-T as a key future capability in its Unmanned Campaign Plan. Consortiums like iMugs (integrated Modular Unmanned Ground System), created with the goal of developing a standard unmanned ground vehicle (UGV) solution in Europe, are also doing their part to advance MUM-T. The group recently completed a demo led by Milrem Robotics exploring how unmanned systems can support manned operations and what role that might play in different military scenarios.

Those exploring MUM-T for the defense sector see it making an impact in a variety of applications, most notably intelligence, surveillance and reconnaissance (ISR), where it creates a standoff distance that keeps soldiers safe and provides the persistence needed to keep eyes on an area for days at a time. Other applications include search and rescue, convoy operations, combat missions, detection, tracking and anti-submarine warfare, with swarming and operations across multiple domains also on the horizon.

The benefits of MUM-T for the defense sector are clear, with some applications already being deployed and others not that far from becoming a reality.

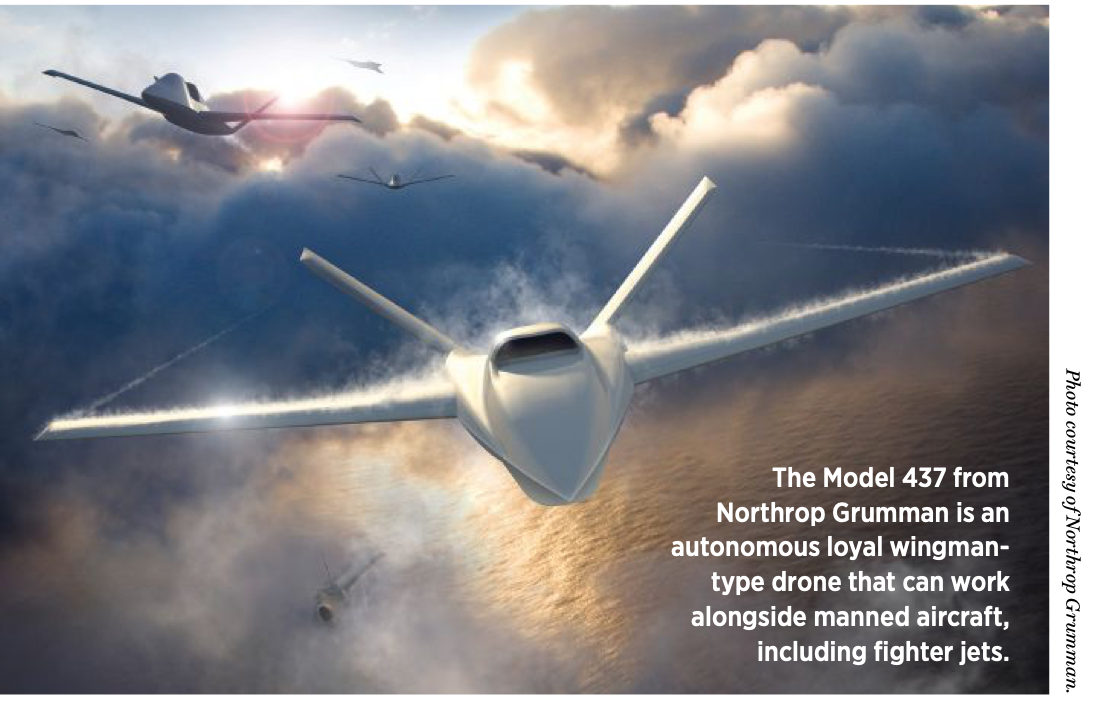

“The global landscape is rapidly evolving and information in the battlespace is moving faster than ever,” said Jane Bishop, sector vice president and general manager, global surveillance, Northrop Grumman Aeronautics Systems. “Manned/unmanned teaming enables rapid action during combat. Successful teaming also has the potential to increase situational awareness and improve combat efficiency and effectiveness for the warfighter.”

MUM-T ACROSS DOMAINS

MUM-T capabilities can present multiple dilemmas and deter adversaries across land, sea and air. What’s possible is “as big as your technical and operational imagination,” said Don “BD” Gaddis, MQ-25 Advanced Design at Boeing.

The MQ-25 Stingray unmanned aerial refueler, for example, if equipped with the right antennas, communication and waveforms, could collect targeting information and then disseminate it across any domain, even subsurface, Gaddis said. The system was recently part of a virtual MUM-T demonstration.

Long-endurance UAS like the MQ-9B SeaGuardian from General Atomics Aeronautical Systems (GA-ASI) can provide daily pattern of life operations to “condition” the enemy, spokesman C. Mark Brinkley said. The SeaGuardian also was recently part of a demo, this one looking at integrating MUM-T in a maritime patrol and reconnaissance role. The MQ-9A Reaper acted as a surrogate for the SeaGuardian, equipped with an anti-submarine warfare (ASW) mission package and Link 16 data link.

“The data collected in the multidomain environment is provided to surface, air and subsurface assets simultaneously for a real-time, multidimensional common operating picture [COP],” Brinkley said. “This constant surveillance allows for fewer manned sorties at a significantly reduced cost per flight hour, while increasing our understanding of how the threat operates and perceives our actions.”

In some scenarios, unmanned systems might track a submarine or a ship, or fly ahead and recon a beach to make sure it’s safe before forces arrive, said Jim Frelk, senior vice president and chief strategic development officer for Clarksburg, Maryland-based Robotic Research.

The team at Airbus Defence and Space is working on a flight demonstration, slated for next year, that will involve manned and unmanned aircraft from different domains, said Aleksander Szwabowicz, UAS and combat air systems marketing manager. The assets will be interconnected in a single network of intelligence, with data provided by the land domain assets visible to the air domain ones and vice versa.

Cooperation between the systems is key, Airbus Head of UAS Marketing Roser Roca-Toha said, because there could be a joint mission that requires one of the drones from the air domain to support an operation for the land domain.

MUM-T will be a key enabler for interoperability when supporting assets across domains in the future, she said.

“We have the land, air and sea domains and every one of them comes with manned and unmanned assets,” Roca-Toha continued. “Each domain has its own mission group, but in these more complex missions the forces would operate together toward the same objective and need interchanging capabilities to accomplish the mission.”

The information collected from the different networks of drones would go to a decentralized multidomain combat cloud, Roca-Toha said, where it’s easy to access for all actors involved. Data fusion and algorithms will be leveraged to process the data in real time so it can be used to quickly make decisions.

One of the benefits of MUM-T is better situational awareness of the battlefield in multidomain operations, said reserve Capt. Jüri Pajuste, defense research and development director at Milrem Robotics of Tallinn, Estonia.

Manned units can use various aerial and ground-based unmanned systems to create a rapid information flow that gives the commander an opportunity to quickly react to changing situations, Pajuste said. Using these systems also can create stand-off distance between the two opposing sides and act as force multipliers, extending operations and effective fire range.

While there are a number of application opportunities across domains, there are still challenges to overcome before the potential is fully realized, DARPA Squad X Program Manager Phil Root said. One is testing. There will need to be new methods developed to validate that these human-machine teams are acting within the commander’s intent as well as legal, moral and ethical constraints—and that’s difficult to do when you’re sensing in one domain and acting in another.

“To support MUM-T most effectively across all domains,” Bishop said, “we need to be thinking about how to leverage collaborative autonomy software, such as Northrop Grumman’s Distributed Autonomy/Responsive Control (DA/RC), to more effectively manage our autonomous fleets alongside manned assets and prepare them to operate in a constantly changing threat environment, up to and including highly-contested battlespace.”

TECHNOLOGY BEHIND THE COLLABORATION

MUM-T technology is all sensor-, algorithm- and software-based, and the capabilities are continually advancing, U.S. Army NGCV CFT UAS lead Lt. Col. Ryan Greenawalt said. In the early days, teams were launching one air effect in demos and developing a data link for command and control. Now, his team has demonstrated two or three systems operating at a time. The ultimate goal is for many nodes to pass on sensor data.

Different sensors, such as ISR for target detection, have been added to the mix, Greenawalt said, and now advances like decoy sensors are coming into play. There’s also movement into electronic warfare and kinetic effects.

Navigation and precise positioning are “critically important” to advancing MUM-T, Frelk of Robotic Research said, particularly in the GPS-denied environments where combat often takes place. Unmanned systems need to know locations of the command structure, dismounts and manned vehicles for coordinated efforts in real time. Robust navigation solutions in both the manned and unmanned assets make that possible.

A higher level of autonomous operations also must be achieved, particularly in challenging environments like underwater or off-road terrain where it’s difficult to navigate, Frelk said. Systems should be able to make decisions to move around obstacles without relying on an operator to tell them what to do, which is where AI comes into play.

The network that allows the systems to communicate is also key, and one of the main challenges, Wallace said. The U.S. Army is doing a lot of work in this area and seeing promise. This is a complex issue as networks must be resilient, cyber-hardened, capable of supporting the bandwidth required and robust enough to maintain assured control.

But as autonomy increases, he said, the network requirements come down—which is where AI has an impact. If the unmanned asset can move from point A to point B with fewer commands, that’s less information that needs to be sent through the network.

A variety of secure communications capabilities are available including mesh networks, Frelk said, which can translate between other communication forms and capabilities. That overall management ensures secure communication between assets, particularly in harsh environments.

“Mesh networks become more important as you want a more coordinated activity of shared information like position and knowledge,” Frelk explained. “Manned and unmanned systems want to see where their robotic teammates are and what they’re discovering. You want them to share maps with each other and to say I’ve collected this information here, you don’t need to go there. And if one system goes down it becomes more resilient to comms losses because you can mesh to get the information back through any number of avenues with unmanned or manned systems.”
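The resilience Frelk describes can be illustrated with a minimal sketch: a breadth-first search that finds a relay path through a mesh and finds another when a node drops out. The node names and link layout below are hypothetical and chosen only for illustration; a fielded tactical mesh would also have to account for link quality, security and bandwidth, none of which is modeled here.

```python
from collections import deque


def find_route(links, source, target, down=frozenset()):
    """Breadth-first search for a fewest-hop path that avoids nodes marked as down."""
    if source in down or target in down:
        return None
    queue = deque([[source]])
    visited = {source}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            return path
        for neighbor in links.get(node, ()):
            if neighbor not in visited and neighbor not in down:
                visited.add(neighbor)
                queue.append(path + [neighbor])
    return None  # no surviving path


# Hypothetical mesh: each asset lists the neighbors it can talk to directly.
MESH = {
    "ugv_1": ["uas_1", "manned_vehicle"],
    "uas_1": ["ugv_1", "command_post"],
    "manned_vehicle": ["ugv_1", "uas_2"],
    "uas_2": ["manned_vehicle", "command_post"],
    "command_post": ["uas_1", "uas_2"],
}

primary = find_route(MESH, "ugv_1", "command_post")
fallback = find_route(MESH, "ugv_1", "command_post", down={"uas_1"})
print(primary)   # route through uas_1
print(fallback)  # route through the manned vehicle and uas_2
```

When `uas_1` is lost, the same search returns a longer path through the manned vehicle and `uas_2`, which is the "any number of avenues" behavior in miniature.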

Airbus recently completed a demonstration use case with the German Air Force where an aircraft commanded a UAS to investigate a threat while respecting air corridors, airspace and mission limitations, Szwabowicz said. A mesh networking data link made collaboration possible.

Northrop Grumman demonstrated a data link for connecting aircraft in highly contested airspace, one capable of long-range command and control through an open architecture network. The flight demonstration linked the Scaled Composites Proteus, a HALE (High Altitude Long Endurance) research aircraft, with a Firebird, a drone with the capability to be flown manned, through an advanced line-of-sight data link with low probability of intercept/low probability of detection characteristics that includes anti-jam properties.

Bishop described the demonstration as a “critical milestone in the evolution of a distributed multidomain battle management command and control architecture that maintains decision superiority for the U.S. military and allies.”

Communication relays also play an important role. GA-ASI used various onboard sensors for its recent demonstration as well as data fusion tools, Brinkley said. The KOR-24A Link 16 Standard Tactical Terminal was the primary integrator for all fleets, and it demonstrated that providing Link 16 relay to a disaggregated fleet over several hundred miles extends operational reach and situational awareness.

“The MQ-9 was able to generate surface and subsurface tracks with all sensors and push those integrated tracks over the Link to participating air and surface assets,” he said. “They, in turn, were able to use those tracks for target solutions.”

The U.S. Navy is interested in using UAS like the MQ-25 as a communications relay, exchanging targeting information with all manned assets, Gaddis said. This means making sure the UAS is in the right place at the right time and has the radios and waveforms necessary to communicate with the different manned assets.

Tethering the drone can extend the communication range of forward-deployed troops by linking radio stations, Pajuste said. Milrem Robotics effectively deployed a tethered drone as part of a demonstration with the Estonian Defence Forces, playing out two different scenarios.

In the first scenario, the company’s THeMIS UGV was integrated with Acecore’s tethered drone. The UGV was controlled BVLOS to its observation position while the tethered drone was used to detect and target the adversary. After detection by the tethered UAS, indirect fire was ordered to eliminate the threat. After target engagement, battle damage and effect on the target were assessed via the tethered drone’s footage.

In the second scenario, the THeMIS UGV was used to retrieve a casualty from a crashed vehicle and subsequently retrieve the vehicle; meanwhile, the drone provided overwatch and situational awareness.

“If the unit is being fired upon, the UAS equipped with an acoustic sensor can detect from what direction and distance the shots were made from,” Pajuste said. “Unmanned systems, with their sensors, can help the soldiers locate and react to the threat faster, thereby reducing the likelihood of casualties.”

DEVELOPING A PLAYBOOK

The key to MUM-T is to break down collaborative behavior into a playbook, Gaddis said, which is basically a list of commands the manned aircraft sends to the unmanned aircraft. The command can be as simple as “turn here” or “change orbit length.” The unmanned system receives the command over the data link and uses its autonomy software to go off the pre-planned mission and complete it.

The advantage? Instead of someone commanding the change, which requires getting on the radio and risking being overheard by the enemy, the manned aircraft quickly delivers the message and the UAS makes the adjustment without any further intervention. It also reduces pilot workload in the manned aircraft, which is an important aspect of any MUM-T scenario. The pilot is commanding an outcome, not a single action, and an aircraft like the MQ-25 determines the steps/actions required to achieve the outcome.
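The playbook idea can be sketched as a lookup table that maps a short command to a pre-defined, deterministic behavior. Everything in this sketch is hypothetical, including the command names, parameters and the `UnmannedAircraft` class; it is not drawn from any actual MQ-25 or E-2D software, and only illustrates the pattern of sending an outcome and letting the unmanned side work out the steps.

```python
from dataclasses import dataclass, field


@dataclass
class UnmannedAircraft:
    """Toy stand-in for an unmanned aircraft's autonomy software (hypothetical)."""
    orbit_length_nm: float = 20.0
    heading_deg: float = 0.0
    log: list = field(default_factory=list)

    def change_orbit_length(self, length_nm):
        # The play names the outcome; the aircraft works out the steps itself.
        self.log.append("leave pre-planned orbit")
        self.orbit_length_nm = length_nm
        self.log.append(f"fly orbit of {length_nm} nm")

    def turn(self, heading_deg):
        self.heading_deg = heading_deg % 360
        self.log.append(f"turn to heading {self.heading_deg}")


# The playbook: a fixed, agreed list of commands the manned aircraft may send.
PLAYBOOK = {
    "CHANGE_ORBIT_LENGTH": UnmannedAircraft.change_orbit_length,
    "TURN": UnmannedAircraft.turn,
}


def execute_play(aircraft, play, **params):
    """Run one play; anything outside the playbook is rejected."""
    if play not in PLAYBOOK:
        raise ValueError(f"unknown play: {play}")
    PLAYBOOK[play](aircraft, **params)


uav = UnmannedAircraft()
execute_play(uav, "CHANGE_ORBIT_LENGTH", length_nm=35.0)
execute_play(uav, "TURN", heading_deg=450)
print(uav.log)
```

Because the set of plays is closed and each one maps to a fixed routine, the sender can know in advance what the receiver will do, and the only traffic on the link is the play name and its parameters.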

During Boeing’s recent demonstration with Northrop Grumman, the team used existing operational flight program software and data links to communicate between the MQ-25 and the E-2D. They discovered many commands that could be used for advanced teaming are already part of the manned aircraft’s data links, making initial MUM-T capability achievable with minimal change to the crew vehicle interface. These commands then turn into behaviors that become ingrained in the autonomy software.

“When you derive general behavior into lower-level autonomy, you want to be able to use that software code for other vehicles no matter the manufacturer,” Gaddis said. “Having software we can reuse is a key part of manned-unmanned teaming.”

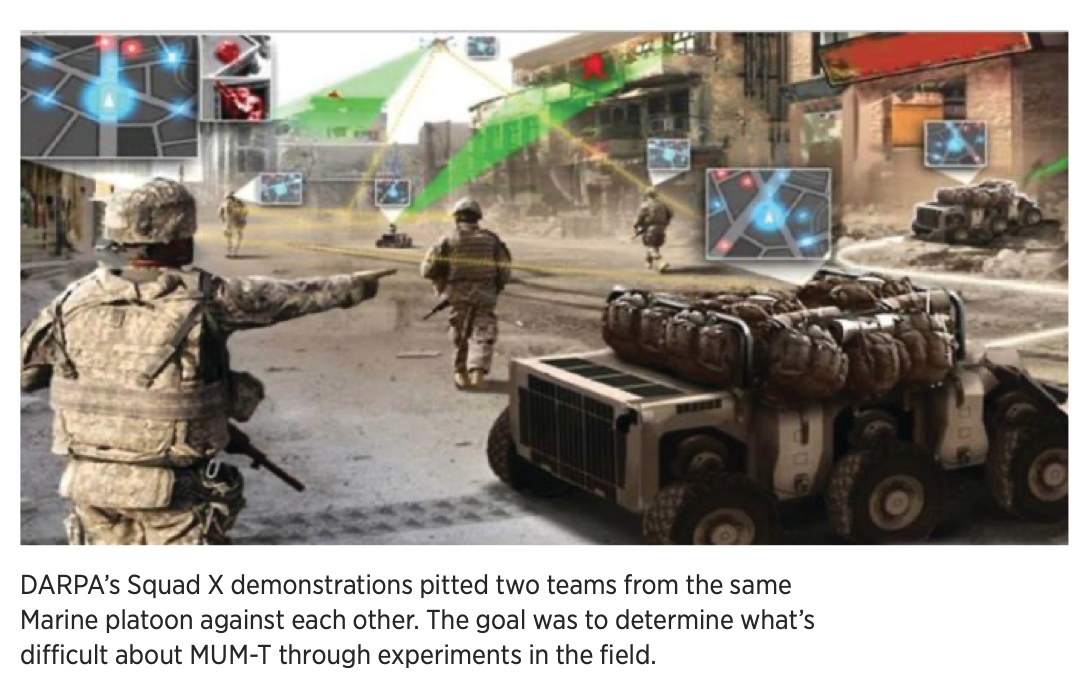

DARPA also looked at shared languages between manned and unmanned vehicles during its Squad X demonstrations, which pitted two teams from the same Marine platoon against each other, Root said. Focusing on smaller units, the goal was to determine what’s difficult about MUM-T through experiments in the field. The teams used technology to intentionally overload the squad leader, making it necessary to rely on Squad X capabilities to win the fight.

AI was trained and employed to help all members of the squad estimate the enemy’s course of action, Root said. This gives the robots as members of the squad a better understanding of the enemy so they can shift focus when needed without being commanded—creating that shared language that’s critical to MUM-T.

Shared sensing is also key, Root said, with an enhanced database taking every battlefield observation from every sensor and sharing that broadly. Tools extend that sensing so the machines understand what the enemy is doing and can proactively take appropriate action. AI and machine learning are essential to making that happen, but getting there will take a lot of training and experimentation.

MORE ROBOTS, FEWER HUMANS

The Army’s current pilot UAS workload is human in the loop, for the most part, Greenawalt said, meaning the systems are relaying information but aren’t sorting through it to make sure the most relevant is being passed along—and sometimes what commanders receive can be overwhelming. The goal is to change that to human on the loop, where AI is analyzing the data to ensure commanders get the bare minimum information to make quick decisions.

This is key to swarming in MUM-T, Greenawalt said, where multiple assets will pass on large amounts of data.
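A rough sketch of the human-on-the-loop idea follows: incoming reports are scored, and only the few most relevant are passed to the commander. The `relevance` score here is a hypothetical placeholder for whatever analysis an AI component would perform, and the threshold, item limit and sample reports are invented purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Report:
    source: str
    summary: str
    relevance: float  # hypothetical 0-1 score standing in for AI analysis


def filter_for_commander(reports, min_relevance=0.7, max_items=3):
    """Keep only reports above the threshold, most relevant first, capped in number."""
    relevant = [r for r in reports if r.relevance >= min_relevance]
    relevant.sort(key=lambda r: r.relevance, reverse=True)
    return relevant[:max_items]


# Invented sample data, for illustration only.
REPORTS = [
    Report("uas_1", "no change on route", 0.1),
    Report("uas_2", "vehicle column moving north", 0.9),
    Report("ugv_1", "obstacle on road", 0.75),
    Report("uas_3", "routine status", 0.2),
]

briefing = filter_for_commander(REPORTS, max_items=2)
for report in briefing:
    print(report.source, report.summary)
```

The commander still sees and can act on everything that gets through; the filter only changes how much has to be read before a decision can be made.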

The goal is for one pilot to control not just one drone but a group of semi-autonomous drones. Through AI, when the commander tasks a group of drones, the UAS will determine the right system to perform each task based on positions, payloads and capabilities, Szwabowicz said. To do that, the drones will need to work on the system of systems level in the complete network of interconnected assets.
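One simple way to picture that kind of tasking is a greedy assignment: for each task, pick the nearest free drone that carries the required payload. This is only an illustrative sketch under stated assumptions, not the method Airbus uses; the drone names, positions, payloads and tasks are all hypothetical, and a greedy pass does not guarantee the best overall allocation.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Drone:
    name: str
    position: tuple       # (x, y) in arbitrary units
    payloads: frozenset   # capabilities carried, e.g. {"camera"}


@dataclass(frozen=True)
class Task:
    name: str
    location: tuple
    required_payload: str


def assign_tasks(drones, tasks):
    """Greedy allocation: nearest available drone with the required payload."""
    available = list(drones)
    assignment = {}
    for task in tasks:
        capable = [d for d in available if task.required_payload in d.payloads]
        if not capable:
            assignment[task.name] = None  # nobody free can do this task
            continue
        best = min(capable, key=lambda d: math.dist(d.position, task.location))
        assignment[task.name] = best.name
        available.remove(best)  # each drone takes one task in this sketch
    return assignment


# Hypothetical group of drones and tasks.
DRONES = [
    Drone("uas_1", (0, 0), frozenset({"camera"})),
    Drone("uas_2", (10, 0), frozenset({"camera", "relay"})),
    Drone("uas_3", (5, 5), frozenset({"jammer"})),
]
TASKS = [
    Task("observe_bridge", (1, 1), "camera"),
    Task("extend_comms", (9, 1), "relay"),
    Task("jam_emitter", (0, 9), "jammer"),
    Task("second_look", (4, 4), "camera"),
]

plan = assign_tasks(DRONES, TASKS)
print(plan)
```

In this example the fourth task goes unassigned because both camera-equipped drones are already committed, which is the kind of gap the commander would need to see and resolve.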

The systems must have the intelligence to be predictive rather than reactive, Frelk said. With something as simple as the company’s work on the U.S. Army’s Expedient Leader-Follower program, where one manned vehicle leads a convoy of UGVs (an application already being deployed), the vehicles must follow each other along the road and know how to identify and react to obstacles such as pedestrians. AI helps the vehicle make those determinations, allowing the systems to operate more autonomously.

UGVs continue to get smarter and require less human involvement, Pajuste said. The basic movement on the ground will be more automated, allowing platforms to navigate where they need to go and complete tasks on their own. That’s already happening with UAS, but it is more of a challenge for UGVs that must learn to identify and avoid objects in their path in complex environments.

While humans will be in control of making firing decisions regardless of the domain, UGVs will eventually have more autonomy to navigate the battlefield on their own. This also reduces the need for a human to communicate with the vehicle, meaning there’s less opportunity for systems to be suppressed by an enemy.

AI and machine learning are part of future capability sets and are being advanced across the industry, Gaddis said, but it’s also important to “continue building basic MUM-T capability now using trusted, deterministic behaviors so that the manned aircraft knows exactly what the unmanned aircraft is going to do in each scenario.”

LOOKING AHEAD

Making MUM-T successful will take a paradigm shift. Instead of people controlling the asset, Roca-Toha said, they’ll have to trust the AI to make some of the decisions. It will be a challenge to accept the new responsibilities between man and machine, but that will result in increased efficiencies and saved lives while preserving manned assets for the most critical decisions and missions. Importantly, a human pilot will always be in the loop for critical decisions such as weapon engagement.

As MUM-T becomes more robust, it will allow commanders to recast human troops to more critical tasks, Wallace said. Actionable information will be gathered without exposing them to tactical risk. They’ll be able to preserve human combat power while still developing a common operating picture. Streamlining and offloading processing to robots, leveraging AI, will also significantly improve effectiveness and eliminate the human error that’s common during chaotic situations.

MUM-T will see limited fielding in a few Army formations at first, Wallace said; that will expand as trust is built with the machines and there’s a better understanding of the associated challenges.

As platforms continue to evolve, systems will have the level of connectivity and sensor technology needed built in to increase coordination, Roca-Toha said. However, MUM-T will continue to be added to existing in-service aircraft as well.

Roca-Toha also sees more collaboration across different types of systems, with multiple unmanned helicopters communicating with manned land or sea assets.

“Think about incorporating command and control elements and tasking a swarm of drones from a ship or a land vehicle,” she said. “So, as we look to the future, we have to understand all the applications of MUM-T. We’re starting with the air-to-air approach, but eventually other domains will play a very active role with interoperable and interchangeable operations across sea, land and air.”